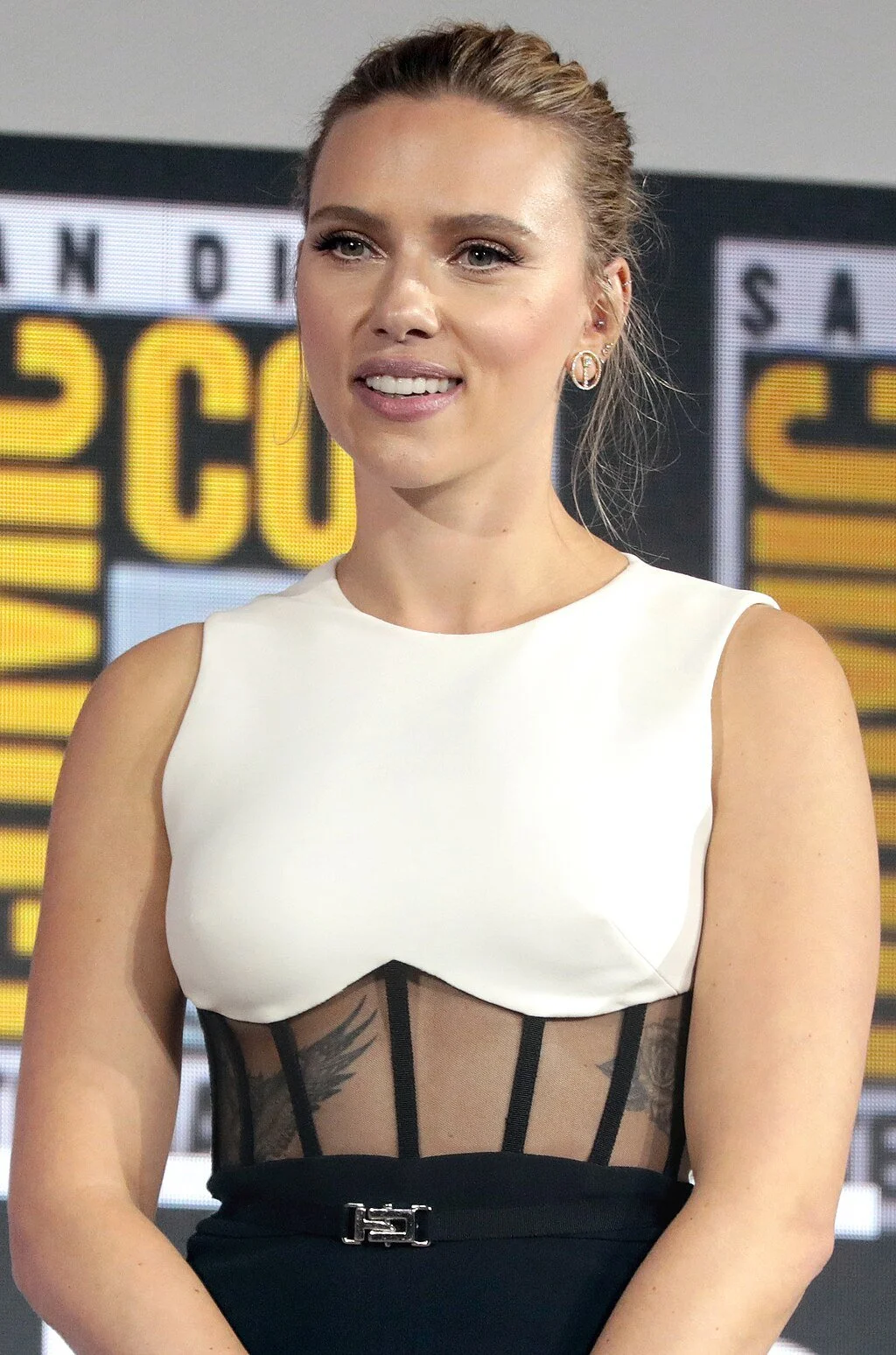

Scarlett Johansson and the Line Hollywood Refuses to Cross

Why one voice dispute quietly reset AI risk tolerance across studios

Gage Skidmore, CC BY-SA 3.0 <https://creativecommons.org/licenses/by-sa/3.0>, via Wikimedia Commons

When Scarlett Johansson publicly accused OpenAI of deploying a voice that sounded unmistakably like her without consent, the company denied intentional imitation. No lawsuit followed. No court ruled on infringement.

And yet, inside Hollywood, the episode landed as a definitive warning.

What made the Johansson dispute different from earlier AI controversies wasn’t the technology involved, but the clarity of the reputational risk. The argument was never about datasets or model training. It was about recognition. Johansson’s claim reframed the issue from how AI systems are built to what audiences perceive when they hear or see the output.

That distinction has since become a quiet but powerful governing principle.

In the months following the controversy, multiple studios and agencies updated internal AI guidelines to include an informal but firm rule: anything that could reasonably be mistaken for a living, recognizable star is off-limits without explicit consent, regardless of technical legality. Even outputs from models trained on licensed or synthetic data now face a second test: plausible identification.

This has had immediate consequences. AI voice tools continue to be used widely for localization, scratch dialogue, and background elements, but projects involving celebrity-adjacent voices are routinely flagged and shut down in early review. Several post-production vendors report being asked not just how a voice was generated, but who it might sound like.

The effect is asymmetric. Anonymous or contractually licensed voices move forward. Celebrity likeness—auditory or visual—does not. Studios are willing to litigate copyright questions; they are far less willing to litigate public perception.

Agencies, in turn, have leaned into the precedent. New talent agreements increasingly include expansive prohibitions on “style simulation” and “likeness adjacency,” language designed to prevent AI use that evokes a performer even without copying them. These clauses are intentionally broad, reflecting the lesson of the Johansson dispute: resemblance, not replication, is where the real danger lies.

Crucially, none of this required regulation. The Johansson moment functioned as a market correction. The cost of getting it wrong—headline backlash, talent revolt, brand damage—proved higher than the benefit of pushing boundaries.

The irony is that AI adoption has not slowed. It has simply rerouted. Studios remain aggressive in using generative tools everywhere except where star identity is involved. The line is not philosophical. It is economic.

Johansson didn’t stop AI in Hollywood. She made one thing clear: celebrity identity is the last domain where automation is still more expensive than restraint.